Calling all extra-terrestrial tele-operation stereo-visual nerds! Here’s one you’ll love… We sat down with Dr. Geovanni Martinez, a staple figure at the University of Costa Rica’s (UCR) Image Processing and Computer Vision Research Laboratory (IPCS-Lab). Dr. Martinez uses Clearpath’s Husky to test his novel visual odometry algorithm, which will take research to a whole new level… an outer-space level, that is…

Goal of the research project

Over the past twenty years, Mars exploration has typically been conducted by six-wheel rocker-bogie mobile rovers. If a rover has any slip-up in navigation, it could lose an entire day of scientific activity. No pressure! To help the rovers overcome steep slopes, objects, and rough terrain, stereo visual odometry algorithms are used.

Dr. Martinez is using the Husky to implement and test his novel monocular visual odometry algorithm, an alternative to traditional stereo visual odometry. The algorithm estimates the robot’s motion by evaluating the intensity differences at a set of observation points between two intensity frames captured by a monocular video camera, one before and one after the robot’s motion …following so far?

The rover’s motion will be estimated by maximizing the conditional probability of the frame-to-frame intensity differences at the observation points. The conditional probability is computed in two steps. First, the intensity signal is expanded into a Taylor series and the nonlinear terms are neglected, resulting in the well-known optical flow constraint. Second, a linearized 3D observation point position transformation is used, which maps the 3D position of an observation point before motion to its 3D position after motion given the rover’s motion parameters. Perspective projection of the observation points into the image plane and zero-mean Gaussian stochastic intensity errors at the observation points are also assumed.
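To make that description a little more concrete, here is a minimal Python sketch of a one-stage estimate built from the same ingredients: the optical flow constraint, a linearized rigid motion, perspective projection and zero-mean Gaussian intensity errors. It is not Dr. Martinez’s implementation. It assumes that the 3D position of every observation point before motion is already known, that the intensity errors are independent with equal variance (so that maximizing the likelihood reduces to ordinary least squares), and that image coordinates are normalized with focal length `f = 1`. All function names, the sign convention and the synthetic test data are hypothetical.

```python
import random

def constraint_row(point, grad, f=1.0):
    """Coefficients a with a . theta = -(frame difference) for one point.

    theta = (Tx, Ty, Tz, wx, wy, wz); linearized motion dP = T + w x P.
    Assumed convention: difference = I_after - I_before = -(gx*u + gy*v),
    where (u, v) is the image displacement of the observation point.
    """
    X, Y, Z = point
    gx, gy = grad
    x, y = f * X / Z, f * Y / Z          # perspective projection
    dX = (1.0, 0.0, 0.0, 0.0, Z, -Y)     # d(dP_x)/d(theta)
    dY = (0.0, 1.0, 0.0, -Z, 0.0, X)     # d(dP_y)/d(theta)
    dZ = (0.0, 0.0, 1.0, Y, -X, 0.0)     # d(dP_z)/d(theta)
    # u = (f*dP_x - x*dP_z)/Z and v = (f*dP_y - y*dP_z)/Z, to first order
    return [(gx * (f * dX[k] - x * dZ[k]) + gy * (f * dY[k] - y * dZ[k])) / Z
            for k in range(6)]

def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        s = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x

def estimate_motion(points, grads, diffs, f=1.0):
    """Least-squares motion estimate: minimizes sum((a . theta + diff)^2)."""
    N = [[0.0] * 6 for _ in range(6)]
    rhs = [0.0] * 6
    for P, g, d in zip(points, grads, diffs):
        a = constraint_row(P, g, f)
        for i in range(6):
            rhs[i] -= a[i] * d
            for j in range(6):
                N[i][j] += a[i] * a[j]
    return solve_linear(N, rhs)

# Synthetic, noise-free check: generate differences from a known motion.
rng = random.Random(0)
true_motion = [0.02, -0.01, 0.05, 0.003, -0.002, 0.004]
points = [(rng.uniform(-1, 1), rng.uniform(-1, 1), rng.uniform(2, 6))
          for _ in range(50)]
grads = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(50)]
diffs = [-sum(a * t for a, t in zip(constraint_row(P, g), true_motion))
         for P, g in zip(points, grads)]
estimate = estimate_motion(points, grads, diffs)
```

The point of the sketch is that the six motion parameters come straight out of the intensity differences and gradients in a single solve, with no separate per-point flow estimation step in between.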

How is this different from what is already out there?

Stereo visual odometry algorithms have already been implemented and tested with very promising results in applications including video compression and teleoperation of space robots. Dr. Martinez’s approach differs from traditional optical flow approaches because it doesn’t follow the typical two-stage algorithm. Instead, it uses a one-stage maximum-likelihood estimation algorithm based on the optical flow constraint equation, which directly delivers the rover’s 3D motion parameters. This one-stage algorithm is more reliable and accurate because it does not rely on two consecutive estimators to get the rover’s 3D motion.

Tell us about the goods!

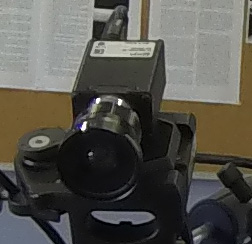

“Currently we are developing a real-time image acquisition system consisting of three IEEE-1394 cameras, one looking downwards, the second looking backwards and the third looking leftwards. It is being developed under Ubuntu 12.04.2 LTS, ROS Fuerte and the programming language C,” commented Dr. Martinez.

The cameras are connected to the laptop onboard the Husky using a 3 Port PCMCIA IEEE 1394 FireWire 400 Laptop Adapter Card. During field tests only one of those three monocular cameras will be used for visual odometry. The image acquisition system corrects, in real time, the radial and tangential distortions due to the camera lens.
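The article doesn’t say which lens model the lab uses, so the following is only an illustrative sketch of what correcting radial and tangential distortion can look like. It assumes the widely used Brown-Conrady model with two radial coefficients (`k1`, `k2`) and two tangential coefficients (`p1`, `p2`), applied to normalized image coordinates. The coefficient values and function names are made up for the example.

```python
def distort(x, y, k1, k2, p1, p2):
    """Apply radial + tangential distortion to an ideal normalized point."""
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2 * r2
    xd = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    yd = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    return xd, yd

def undistort(xd, yd, k1, k2, p1, p2, iterations=20):
    """Invert the model by fixed-point iteration, starting from (xd, yd)."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        radial = 1.0 + k1 * r2 + k2 * r2 * r2
        dx = 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
        dy = p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
        x = (xd - dx) / radial
        y = (yd - dy) / radial
    return x, y

# Hypothetical coefficients for a mildly distorting lens.
coeffs = dict(k1=-0.2, k2=0.05, p1=0.001, p2=-0.0005)
ideal = (0.3, -0.2)
observed = distort(*ideal, **coeffs)
recovered = undistort(*observed, **coeffs)
```

A system that has to keep up with a live camera would more likely compute this mapping once per pixel and store it in a lookup table than iterate on every frame; the loop here is only meant to show the model.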

“After developing and testing the real-time image acquisition system, we will begin with the implementation of our monocular visual odometry algorithm in the Husky,” said Dr. Martinez. Here the key challenges will be to make it run in real time and to make it robust enough to operate in real outdoor and indoor environments.

Why Husky?

Dr. Martinez explained, “The Robot Operating System (ROS) driver for Husky A200 provided by Clearpath Robotics has been very helpful to us in two ways, which have saved us a lot of development time.”

1. The real-time image acquisition system for the IEEE-1394 cameras (including lens distortion correction) turned out to be easy to implement because of the ROS compatibility.

2. Commanding the Husky using the ROS driver was also very easy to implement.

“We like Husky because the software for image acquisition, and driving the robot, was easy to implement using ROS. It saved us a lot of development time. Additionally, it is strong enough to be driven in extreme environments.” – Dr. Martinez